Typing a prompt feels like nothing.

No smoke. No engine noise. No truck backing into your driveway with a load of coal.

But the second you ask AI to summarize a contract, write a sales email, or turn your dog into a medieval king, something very physical happens. A data center wakes up. Chips get busy. Heat builds. Cooling systems kick in. Somewhere, a utility planner sighs.

Globally, data centers used about 415 TWh of electricity in 2024, or roughly 1.5% of world electricity use, and the IEA projects that figure could reach around 945 TWh by 2030. In 2025 alone, the IEA says data-center electricity demand rose 17%, with AI-focused centers growing even faster.

That is the weird little contradiction at the heart of modern AI: the product feels digital, but the cost is deeply industrial.

⚡ “The cloud is a beautiful word for a warehouse full of electronics.”

Welcome to 1000whats — where energy jargon comes to die, and useful knowledge comes to life.

What is AI’s energy problem?

AI’s energy problem is simple to say and easy to miss:

It is the growing amount of electricity, cooling, water, and grid infrastructure needed to train and run AI systems at scale.

Imagine you ask a chatbot a simple question.

You feel as though you have done almost nothing. In one sense, you have.

In another sense, you have just pressed a button on an industrial system somewhere else. Chips begin switching furiously. They heat up. Fans and chillers work to get rid of that heat. Power conversion equipment keeps the whole thing alive. And because millions of other people are doing the same thing, the system cannot nap between questions. It has to sit there, awake, humming, ready. That is the problem.

Not one dramatic burst, but endless small bursts that add up to a giant appetite.

Google’s own explanation of AI inference makes this crystal clear: the real footprint includes not just the AI chips doing the work, but also idle capacity kept ready for traffic spikes, CPUs and RAM, and data-center overhead like cooling and power distribution.

⚡ “One prompt is small. Civilization is what happens when you multiply small things by a few billion.”

Why does AI’s energy problem exist?

Because AI is built on three habits humans are terrible at resisting: bigger models, faster answers, and more usage.

In practice, the main drivers are these:

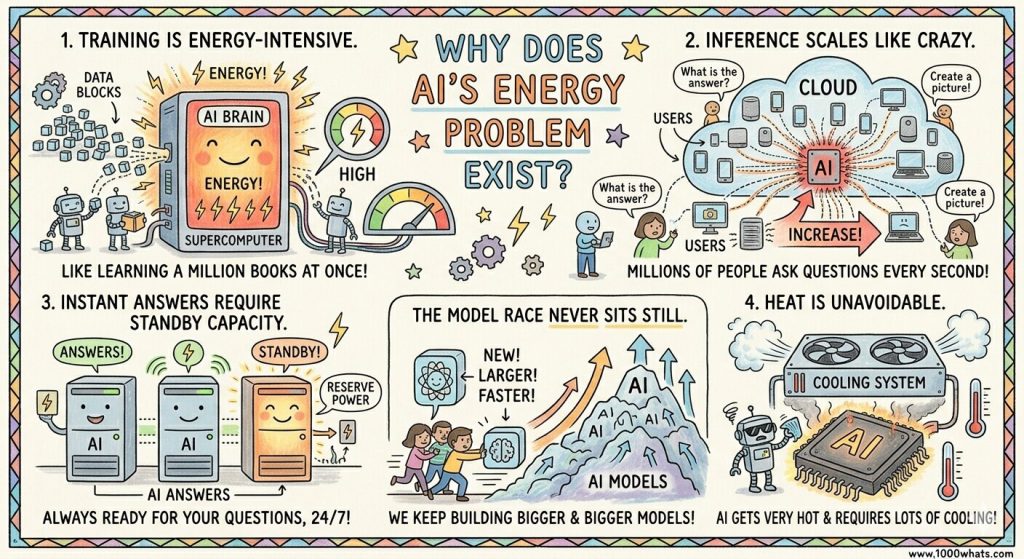

- Training is energy-intensive. Teaching a large model means pushing massive amounts of data through energy-hungry hardware for long periods. MIT notes that training generative AI models can demand huge amounts of electricity and put pressure on the grid.

- Inference scales like crazy. Once the model is live, every question, image request, summary, and rewrite costs energy. Most people think the fireworks are in training. But at scale, the real monster can be inference, because it happens over and over and over again.

- Instant answers require standby capacity. AI systems keep extra machines ready so they can respond fast and survive traffic spikes or failures. That reliability is wonderful for users and lousy for efficiency.

- Heat is unavoidable. High-performance chips run hot, which means more cooling equipment, more overhead, and often more water use.

- The model race never sits still. Newer models arrive constantly, and as they become larger or more complex, inference can become more energy-intensive too.

From a market perspective, this is the trap: efficiency improvements are real, but demand grows even faster. That is why “AI is getting more efficient” and “AI is becoming an energy headache” can both be true at the same time.
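Here is a minimal sketch of why both statements can be true at once. Every number in it is made up for illustration (the 20% yearly efficiency gain, the 60% yearly growth in queries, and the 1 Wh starting cost are assumptions, not measured values), and `total_energy` is a hypothetical helper, not anyone's official model.

```python
def total_energy(base_wh_per_query, base_queries, efficiency_gain, demand_growth, years):
    """Return total energy (Wh) for year 0 through `years`.

    efficiency_gain: fraction by which energy per query falls each year (assumed).
    demand_growth:   fraction by which the number of queries rises each year (assumed).
    """
    totals = []
    for year in range(years + 1):
        per_query = base_wh_per_query * (1 - efficiency_gain) ** year
        queries = base_queries * (1 + demand_growth) ** year
        totals.append(per_query * queries)
    return totals


# Illustrative inputs only: 1 Wh per query, one million queries in year 0.
totals = total_energy(1.0, 1_000_000, efficiency_gain=0.20, demand_growth=0.60, years=5)

for year, wh in enumerate(totals):
    print(f"year {year}: {wh / 1000:,.0f} kWh")
```

With these assumed rates, each query gets 20% cheaper every year, yet total consumption still climbs by 28% a year, because 0.8 × 1.6 = 1.28. Efficiency wins the per-prompt battle and loses the total.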

⚡ “AI doesn’t strain the grid because one prompt is enormous. It strains the grid because billions of small prompts never go to sleep.”

What actually consumes the energy, water, and everything else?

Let’s open the box.

When people hear “AI uses a lot of energy,” many picture some giant glowing brain in the desert, humming ominously like a villain’s lair.

That is not quite right.

What you really have is a data center: a physical building packed with computing machines, storage drives, network gear, and support equipment. AWS defines a data center as a physical location that stores computing machines and related hardware, and says it contains the compute, storage, network, and support infrastructure that digital services need.

So if you have never heard of a data center, think of it this way:

A data center is a factory for digital work.

Instead of making shoes or steel, it makes searches, streams, emails, AI replies, and every other little miracle you now expect before your coffee gets cold.

⚡ “AI does not live in the sky. It lives in buildings full of machines that get hot.”

First: where does the electricity go?

Start with the obvious part: the computers.

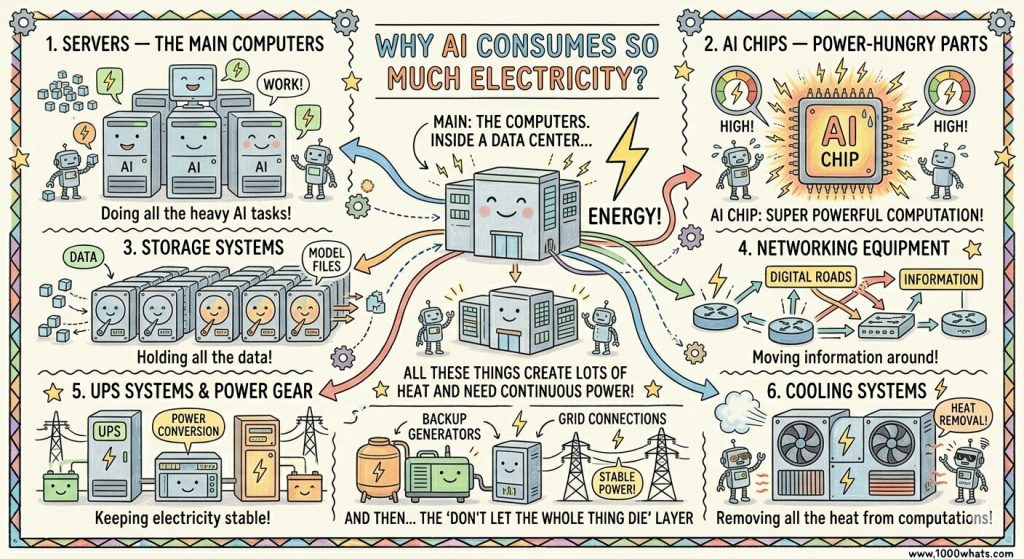

When you ask AI a question, the answer does not float out of the universe like wisdom from a mountain. It is produced by machines doing a huge number of calculations very quickly. Inside a data center, electricity is used by:

- Servers — the main computers doing the work

- AI chips — the especially power-hungry parts built for heavy computation

- Storage systems — the machines holding data and model files

- Networking equipment — the digital roads moving information around

- UPS systems and power conversion gear — the equipment that keeps electricity stable

- Cooling systems — the machinery that removes the heat all of the above create

- Backup generators and grid connections — the “don’t let the whole thing die” layer

Those last few items matter, because people often imagine all the electricity goes into “thinking.”

No. A lot of it goes into surviving the consequences of thinking.

The chips do the math.

The math makes heat.

Then more machines are needed to get rid of that heat.

That is the basic trick.

Why does it get so hot?

Because calculation is physical.

Every time a chip flips billions or trillions of tiny electronic switches, some energy ends up as heat. Not because the engineers were careless. Because that is how physics sends a receipt.

The IEA says accelerated servers are a major reason data-center electricity demand is rising, and that cooling and other infrastructure together account for about 20% of the net increase in global data-center electricity consumption in its base case to 2030.

⚡ “The machine does not merely answer your question. It also has to keep itself from cooking.”

What does “cooling” actually mean?

Cooling is just the art of carrying heat away.

Imagine your laptop getting warm on your knees. Now imagine a whole building filled with much more powerful machines, packed closely together, all doing work at once. Suddenly your warm laptop has become a thermal management problem with a parking lot.

Data centers use things like:

- Fans to move hot air away

- Air conditioning or chillers to cool the air

- Liquid cooling systems to pull heat away more directly

- Cooling towers in some facilities, where water helps dump heat to the outside world

So when we say AI uses electricity, part of what we mean is:

it uses electricity to run computers, and then more electricity to stop those computers from melting into expensive toast.

Why does water come into this?

Because water is very good at moving heat.

Some data centers use water directly in cooling systems. Others use electricity from power plants that themselves consume water. That gives AI a double water story: on-site water use and upstream water use.

Berkeley Lab reports direct U.S. data-center water consumption rose from 21.2 billion liters in 2014 to 66 billion liters in 2023. The same report estimates that the indirect water footprint tied to the electricity used by U.S. data centers was nearly 800 billion liters in 2023.
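To put those Berkeley Lab figures side by side, here is a quick back-of-the-envelope calculation. It uses only the three numbers quoted above. Treating “nearly 800 billion liters” as 800 is a rounding assumption, and the variable names are just for illustration.

```python
# Figures quoted above, in billions of liters.
direct_2014 = 21.2
direct_2023 = 66.0
indirect_2023 = 800.0  # "nearly 800", rounded up here as an assumption

# How many times larger direct use was in 2023 than in 2014.
growth_multiple = direct_2023 / direct_2014

# The average yearly growth rate that would produce that multiple over 9 years.
years = 2023 - 2014
avg_annual_growth = growth_multiple ** (1 / years) - 1

# How the upstream (power-plant) water footprint compares with on-site use.
indirect_to_direct = indirect_2023 / direct_2023

print(f"Direct water use grew about {growth_multiple:.1f}x in {years} years")
print(f"That is roughly {avg_annual_growth:.1%} per year on average")
print(f"Indirect use was about {indirect_to_direct:.0f}x the direct use in 2023")
```

The takeaway: direct use roughly tripled in nine years, and the water hiding behind the electricity is around twelve times larger than the water used on site.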

A simple way to picture it is this:

- electricity runs the machines

- machines create heat

- cooling removes the heat

- water often helps with the cooling, either directly or indirectly

That is the mystery, now demystified.

Why are AI data centers different from ordinary computing?

Because AI likes very intense hardware.

A normal office computer is like a bicycle.

A big AI server is more like a drag racer.

The IEA says electricity use in accelerated servers, mainly driven by AI adoption, is projected to grow by about 30% annually in its base case to 2030. And in its 2026 update, it says that by 2027 a single advanced server rack, about the size of a large refrigerator, could have peak power demand equal to that of 65 households.

Now you can see the problem without jargon.

This is not “a few computers in a room.”

This is fridge-sized boxes with the appetite of a neighborhood.

⚡ “Once a single rack starts drinking power like 65 homes, you are no longer talking about gadgets. You are talking about infrastructure.”
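Growth of “about 30% annually” sounds abstract until you turn it into a doubling time. The sketch below does that with the standard compound-growth formula. The function names are illustrative, and the five-year horizon is just an example, not an IEA figure.

```python
import math


def doubling_time(annual_growth):
    """Years needed to double at a constant compound growth rate."""
    return math.log(2) / math.log(1 + annual_growth)


def growth_factor(annual_growth, years):
    """How many times larger something gets after `years` of compound growth."""
    return (1 + annual_growth) ** years


rate = 0.30  # the IEA's approximate base-case growth rate quoted above

print(f"Doubling time at {rate:.0%} per year: {doubling_time(rate):.1f} years")
print(f"Growth over 5 years: {growth_factor(rate, 5):.1f}x")
```

At 30% a year, consumption doubles in a little over two and a half years and nearly quadruples in five.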

And what is “the rest”?

The “rest” is everything people forget because it is less glamorous than the AI chip.

It is the batteries and UPS systems that smooth out power. The backup generators that keep the site alive during outages. The transformers, cables, pumps, power electronics, and cooling hardware that make the entire facility function.

So when people say “AI uses energy,” the honest version is:

AI uses a whole stack of things.

Electricity is the biggest daily input.

Water often helps manage the heat.

And behind both of those sit buildings, hardware, pipes, power systems, and supply chains.

⚡ “The answer on your screen is light. The machine behind it is heavy.”

Why does it keep consuming energy even when you are not using it?

Because fast service requires readiness.

People expect AI to answer right away. Not in ten minutes. Not after a polite wait. Now.

That means providers cannot power everything down between prompts like a shopkeeper turning off the lights at night. They keep a lot of equipment ready, so the system can handle sudden spikes in demand. Google says many public estimates miss this by counting only the active chips and ignoring everything else needed to run the service at scale.

This is a little like a fire station.

Most of the time, the truck is not racing down the street. But it still has to exist, stay maintained, stay fueled, and be ready.

AI infrastructure works the same way. Part of the energy cost is not just the answer itself. It is the price of being prepared to answer instantly.
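A rough sketch of that accounting is below. It is not Google's methodology, only an illustration of the idea that standby power gets shared among however many prompts arrive. All inputs (0.2 Wh of active compute, 500 W of standby equipment, a 20% overhead for cooling and power distribution) are invented for the example.

```python
def energy_per_prompt_wh(active_wh, idle_power_w, prompts_per_hour, overhead_fraction):
    """Estimate full-system energy per prompt in watt-hours.

    active_wh:         energy the chips use while actually answering (assumed)
    idle_power_w:      power drawn by standby capacity kept ready (assumed)
    prompts_per_hour:  traffic over which that standby power is shared
    overhead_fraction: extra energy for cooling and power distribution (assumed)
    """
    # Standby gear running for one hour uses idle_power_w watt-hours,
    # which is split evenly across the prompts served in that hour.
    idle_wh = idle_power_w / prompts_per_hour
    it_wh = active_wh + idle_wh
    return it_wh * (1 + overhead_fraction)


busy = energy_per_prompt_wh(0.2, idle_power_w=500, prompts_per_hour=10_000, overhead_fraction=0.2)
quiet = energy_per_prompt_wh(0.2, idle_power_w=500, prompts_per_hour=1_000, overhead_fraction=0.2)

print(f"Busy hour:  {busy:.2f} Wh per prompt")
print(f"Quiet hour: {quiet:.2f} Wh per prompt")
```

With these made-up inputs, the same prompt costs almost three times more during the quiet hour, because fewer prompts are splitting the bill for equipment that stays awake either way.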

How much electricity does AI use?

Now that the machine is visible, the numbers start to mean something.

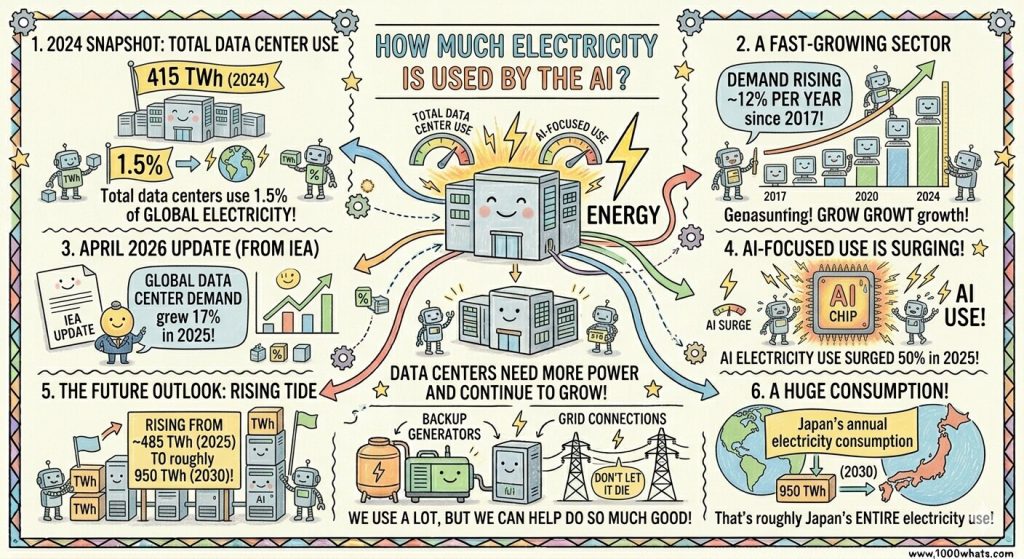

The IEA says data centers accounted for about 415 TWh of electricity use in 2024, equal to around 1.5% of global electricity consumption. It also says the sector is growing fast, with demand rising about 12% per year since 2017.

That is already country-sized.

Not phone-charger sized.

Not “a few office buildings” sized.

Country-sized.

And the story kept accelerating. In its April 2026 update, the IEA said global electricity demand from data centers grew 17% in 2025, while electricity consumption from AI-focused data centers surged 50% in the same year. It now sees total data-center electricity use rising from about 485 TWh in 2025 to roughly 950 TWh in 2030.

To get a picture of how big this is, 950 TWh is not “a lot of server power.” It is roughly the annual electricity consumption of an entire country like Japan. Once you are comparing AI infrastructure to nations instead of gadgets, you are in a different story altogether.
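If you want to sanity-check those figures yourself, the sketch below works out the yearly growth rate implied by going from about 485 TWh in 2025 to roughly 950 TWh in 2030. It assumes smooth compound growth, which is a simplification, and the function name is illustrative.

```python
def implied_annual_growth(start_twh, end_twh, years):
    """Constant yearly growth rate that takes start_twh to end_twh in `years`."""
    return (end_twh / start_twh) ** (1 / years) - 1


# Figures quoted above from the IEA.
twh_2024 = 415
twh_2025 = 485
twh_2030 = 950

# Check that 17% growth on the 2024 figure lands near the 2025 figure.
estimate_2025 = twh_2024 * 1.17

rate = implied_annual_growth(twh_2025, twh_2030, 2030 - 2025)

print(f"415 TWh grown by 17% gives about {estimate_2025:.0f} TWh")
print(f"Implied growth from 2025 to 2030: about {rate:.1%} per year")
```

The numbers hang together: 17% growth on 415 TWh gives roughly 486 TWh, and reaching 950 TWh by 2030 implies demand compounding at a bit over 14% a year.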

⚡ “The problem is not that one prompt is a bonfire. The problem is that the bonfire never goes out.”

Pros and cons: the honest version

AI is not just an energy villain. It can also help.

Potential upsides

- AI can improve grid operations, industrial efficiency, and energy-system decision-making. The IEA explicitly notes that AI could transform how the energy industry operates at scale.

- Efficiency gains are possible. UNESCO and UCL say smaller task-specific models can cut energy use by up to 90%, and shorter prompts and responses can reduce it by more than 50%.

The downsides

- Data centers are growing fast enough to become a genuine electricity-planning issue.

- Cooling can raise water demand, especially in hot or water-stressed areas.

- Near-term growth will not be powered by clean energy alone. The IEA says renewables meet nearly half of additional data-center electricity demand through 2030, but natural gas and coal together are still expected to meet over 40% of the additional demand in that period.

- Transparency is still weak. The OECD has called for better measurement and reporting, and the European Commission has already moved toward mandatory reporting on data-center energy performance and water footprint.

⚡ “The real question is not whether AI uses energy. Of course it does. The question is whether we are building its appetite faster than we are building clean power.”

Why it matters right now

Because this is no longer a future problem.

Data-center electricity demand is accelerating now. The EU is building reporting systems now. Utilities are wrestling with large new loads now. And companies are making location and infrastructure bets now.

In other words, AI’s energy problem is becoming an infrastructure problem.

And infrastructure problems are never solved by slogans. They are solved by hard choices about design, efficiency, transparency, grid investment, and where these facilities get built.

How can AI’s energy problem be reduced?

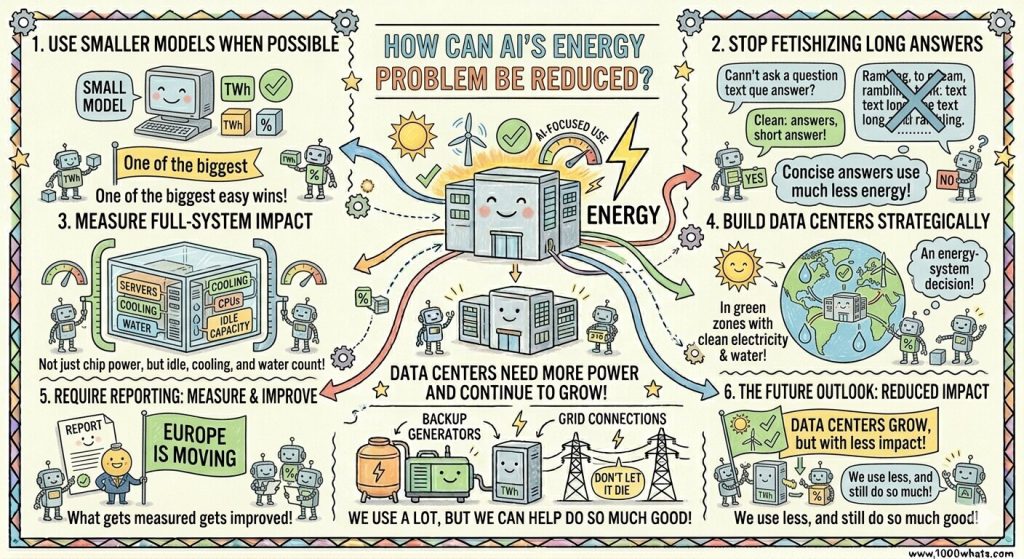

In practice, the smartest moves are not mysterious:

- Use smaller models when a smaller model will do. This is one of the biggest easy wins.

- Stop fetishizing long answers. Shorter prompts and shorter outputs can cut energy use significantly.

- Measure full-system impact, not just chip power. Idle capacity, cooling, CPUs, and water all count.

- Build data centers where clean electricity and water conditions make sense. That is an energy-system decision, not just a tech decision.

- Require reporting. What gets measured gets improved, and Europe is already moving in that direction.

The encouraging part is that efficiency gains are possible. The IEA’s high-efficiency case shows data-center electricity demand in 2035 could be more than 15% lower than its base case, and UNESCO/UCL’s work suggests software and model choices can make an enormous difference.

Final thoughts

AI’s energy problem is not a scandal because computers use electricity.

That would be childish.

The real issue is that we are treating AI like pure magic when it is actually physical infrastructure with a growing appetite. And once you see that, the debate changes. It stops being about whether one chatbot answer is “worth it” and starts being about whether we are designing the whole system intelligently.

My view is simple: AI is worth having, but only if we stop pretending its physical footprint is somebody else’s problem. Once you see the wires, the chillers, the transformers, the water, and the country-sized electricity numbers, the whole story becomes clearer. The magic is still there. But now you know what the trick costs.

What do you think: should the industry focus first on cleaner power, smaller models, or mandatory transparency?

Until next time, stay curious! 😎